Views on Assessment over Time


How views of assessment have changed 👁️🔄️

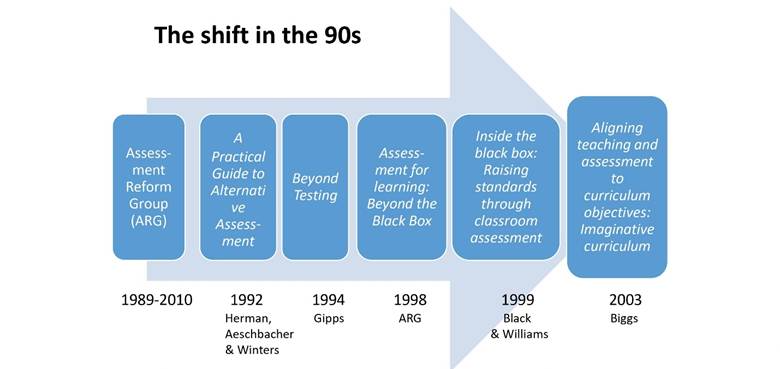

As mentioned in the ‘bite-sized’ section, views about assessment changed radically in the 1990s, a shift that became very visible in the titles of books, as the accompanying figure shows.

The Assessment Reform Group made a distinction between Assessment of Learning (summative) and Assessment for Learning, and a third category, Assessment as Learning, was added soon afterwards. This third category has been described as follows:

“A distinction has also been made between assessment for learning and assessment as learning, the latter generally seeing students as self-regulating: i.e., monitoring, reflecting on and peer/self-assessing their own learning, and in so doing learning more about themselves as learners. The idea is to help learners to understand, interpret and act upon feedback, and so exercise their agency and decide their next step(s). This fits completely with the action-oriented philosophy of feedback and feedforward in dynamic learning situations that are rich in affordances:

An iterative process of drafting or rehearsal is advisable because people do not manage a masterly performance first time around. For the same reason, assessment in earlier phases should take the form of feedback and feedforward, encouraging attempts to use more complex language, not discouraging linguistic experimentation by penalising error. This may well require a radical shift of emphasis in the assessment culture of the institution, away from marks towards the creation of artefacts that learners can be proud of, perhaps building on the concepts of identity texts and language portfolios. (Piccardo & North, 2019, p. 280).” (CEFR Expert Group, p. 62)

The distinction between assessment for, as and of learning is summarised in the table below. As can be seen, Assessment for Learning includes what a great language tester called the gentle art of diagnostic assessment. Assessment as Learning is very much related to strengthening learners’ metacognition, self-regulation and autonomy.

| Purpose of assessment | Nature of assessment | Use of information gathered |
| --- | --- | --- |
| Assessment for learning is the process of seeking and interpreting evidence for use by learners and their teachers to decide where the learners are in their learning, where they need to go, and how best to get there (ARG, 2002: 2) | Diagnostic: To gather information before the teaching/learning process starts about proficiency level, knowledge and learning styles, to respond to students’ needs. | To determine what students already know in relation to expectations, in order for teachers to plan appropriate and realistic learning goals and to plan support if necessary. |
| | Formative: To gather information during the teaching/learning process. Can be more or less formal and ongoing. | To monitor students’ progress, provide timely and targeted feedback, and receive feedback on the instruction process. |
| Assessment as learning focuses on the explicit fostering of students’ capacity over time to be their own best assessors (Western and Northern Canadian Protocol, p. 42) | Formative: frequent and ongoing, with support, modelling, and guidance from the teacher. | Used by students for peer-assessment, self-assessment and self-monitoring, to reflect on their learning and to foster autonomy. |
| Assessment of learning focuses on the achievement level and has a reporting function (certifications, tests, report cards). It is often high-stakes for learners. | Summative: at the end of a period of learning. May be used to inform further instruction. | To provide a summary of the learning at a given time. To make a judgment, to assign a value, to report inside and outside the school. |

The concept of Dynamic Assessment is very much linked to that of Assessment as Learning, as it is also frequent and ongoing. It focuses on the process of learner development, and on variability in that development, as well as on mediated learning experiences (MLEs) and scaffolding, which the teacher/assessor gradually withdraws as the learner makes progress. Dynamic Assessment tends to involve test/retest and draft/redraft cycles. The fundamental idea is that it is through understanding the learner’s modifiability that the teacher/assessor can design effective intervention and support. It therefore requires a lot of attention to each individual student.

Validity ✅

The CEFR 2001 defines validity as follows:

A test or assessment procedure can be said to have validity to the degree that it can be demonstrated that what is actually assessed (the construct) is what, in the context concerned, should be assessed, and that the information gained is an accurate representation of the proficiency of the candidate(s) concerned.

Read Section 5.1.2 on Validity (pp. 157-160) in the chapter on assessment of the book The CEFR in Practice, which is available on the CEFR website. This validity model is very relevant for language teachers for two reasons. Firstly, validity used to be something that one investigated statistically after a test had been taken; increasingly, however, language testers appreciate that a good assessment task will be similar to a good learning task: it should be educationally worthwhile. Secondly, all the features of the model are just as important for classroom assessment as they are for formal tests; the difference is in the rigour of the procedures one should undertake to ensure them.

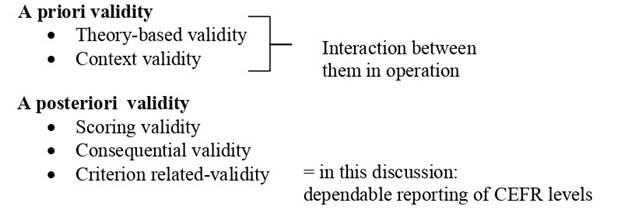

Section 5.1.2 explains Weir’s model of a priori validity (based on theory and on appropriateness to the context) as well as more traditional a posteriori validity, which is simplified below.

Weir’s (2005) Validity Model

There are several other current validity models, the most important of which are those from Messick and Kane.

Historically, validity was considered separately from reliability and feasibility in context. People talked of a trade-off between validity and reliability. Formal tests tended to chase reliability, which meant narrowing the construct (what is assessed) by excluding what statisticians call ‘noise’—the messiness of real people and real lives. This led to very long multiple-choice tests, whose validity could be challenged on the grounds that they had no connection to real language use. Validity was divided up into different types like construct validity (is the test assessing what it should?), content validity (does it reflect the course, if there was a course?), concurrent validity (does it correlate to other tests of this construct?), face validity (does it look like a good test; do people accept it as a good test?), and finally predictive validity (does it help us identify people who will be successful in the target situation when using the language?).

Messick pointed out that all these types of validity, as well as reliability (now called ‘scoring validity’, which Weir later adopted), are part of construct validity, which he defined as

“…an integrated evaluative judgment of the degree to which empirical evidence and theoretical rationales support the adequacy and appropriateness of inferences and actions based on test scores or other modes of assessment.” (Messick, 1989, p. 13)

Kane put the emphasis in validity on building an argument to support the interpretation of the results of an assessment. This relies on a chain of inferences: 1) Scoring: the performance observed, which produces a score; 2) Generalization to what he called a ‘universe score’; 3) Extrapolation to “real-world performances” (Kane, 2013, p. 27); 4) Theory-based interpretation; and 5) Implications / utilisation, including aspects like relevance, utility, intended consequences, and sufficiency. The result is a series of claims (‘warrants’), each with a systemic ‘backing’ that builds the argument. In the adaptation to language testing (Bachman, 2005), much emphasis has been put on the final inference, concerning relevance, utility, consequences, and sufficiency, and the term ‘consequential validity’ has been coined and is also used in the language field outside language testing.

Recent developments ⚙️➡️

Assessment as Learning, Dynamic Assessment and more sophisticated views of validity (especially regarding context and consequences) are examples of the way in which, in line with sociocultural/socio-cognitive and situated views of learning, researchers are starting to go beyond narrow conceptualizations of proficiency as language-based or task-based performances towards assessment of new and more complex constructs. Already in the early 1990s there were moves towards the assessment of integrated skills in problem-solving activities, which have increasingly been set in ‘authentic’ real-life contexts with conditions and constraints, mirroring the developments in pedagogy with the action-oriented approach.

Increasingly, in innovative assessment procedures, the concept of Assessment as Learning is expanded to include a requirement that the assessment itself should be a worthwhile educational experience in its own right: an activity in which the student learns. In such approaches, candidates need to actively develop understandings through the source materials and instructions provided, with assistance from or in collaboration with peers, and are expected to use these understandings in problem resolution.

Scenario-based assessment (SBA) is an example of such a development, linked to the action-oriented approach. SBA involves:

“A description of a coherent set of naturally occurring, imaginary scenes in a scenario narrative in which a test taker, interacting with simulated peers online, works collaboratively to bring the overarching scenario goal to a performative resolution. Given that scenarios are surrogates for real-life situations, they are used to create a context that requires examinees to use all the resources at their disposal (e.g., mental, social, linguistic, affective) to work through a carefully sequenced set of tasks to achieve the desired goal. Scenarios are naturally flexible in that they can be engineered to present straightforward scripts with few complications or surprising elements. Conversely, they can be engineered to present storylines with unexpected turns such as novel insights, shifts in values or perspectives, feedback, and so forth. Similar to real-life conditions, just about anything can be manipulated to create dynamic and engaging scenarios.” (Purpura, 2019)

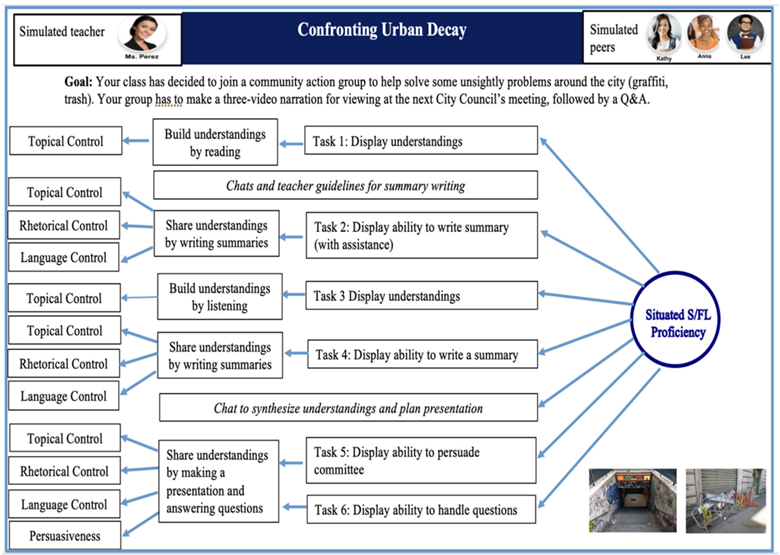

The scenario is not only intended to engage examinees in a problem they might actually want to solve, but also, echoing the idea of assessment as learning, “is designed to provide examinees with a worthwhile educational experience in its own right” (Purpura, 2021, A74). The accompanying figure comes from Purpura’s 2021 article and shows a more conventional integrated skills test task transformed into scenario-based assessment.

As is often the case with action-oriented scenarios, there is a phase in which candidates build an understanding from source material (written and spoken); after summarizing this understanding, they collaborate to synthesize what they know and to plan a presentation. There is then a culminating task in which they make the presentation and answer questions.

A real example comes from CLES (Certificat de compétences en langues de l’enseignement supérieur), the examination offered by the association of French university language centres. First a scenario is designed with all input documents (videos, audio texts, written texts) related to the topic. After gaining an understanding of the issue and the mission to be completed, the candidates (who are tested in pairs) summarize the information on the issue in a neutral written report. This is followed by an interaction between the two candidates in which they each defend a point of view but need to reach a compromise.

A 2011 CLES scenario, for example, involves the cashless society. Enacting the role of a student representative for their university, the candidate has to use the information from the source texts to write a report for the Student Council evaluating the benefits and drawbacks of the town administration’s proposal for the town to go cashless. They then take part in a debate with the other candidate, defending a particular position. Their mission is to use information from the source documents to discuss, negotiate, and reach a compromise according to the role that has been assigned to them.

Now that you have got a good idea of current developments in language assessment, why not round off this information sheet by reading Purpura’s article, “A rationale for using a scenario-based assessment to measure competency-based, situated second and foreign language proficiency”?
